Key Findings

Labor, data, software, and infrastructure all belong in the model. If your calculator only tracks sending platform fees, it's a software quote, not an ROI forecast.

Reply rates, meeting conversion, and close rates should come from your own campaigns, not benchmark tables. Borrowed numbers produce borrowed forecasts that break under pressure.

Signal-based targeting can lift reply rates from the 1-3% baseline to 5-8%. A cost per meeting above $150 means your targeting, deliverability, or tooling needs an audit before you scale.

High replies without meetings, meetings without pipeline, and pipeline without revenue all point to different problems. Read the funnel in order instead of celebrating the headline number.

Fix the offer before the copy, the sequence, or the calculator. Prospects get cold emails every other day. The message has to be too good to ignore.

An in-house SDR runs $60K-$120K per year plus tooling and ramp time. A done-for-you engagement consolidates that into one operating line with execution risk transferred. Both can work. Only one is usually counted honestly.

Most advice on a cold email ROI calculator is backwards. People obsess over the tool, then feed it fantasy numbers.

That's why the forecast looks clean and the campaign still loses money.

A calculator doesn't fix bad assumptions. It just makes them look more official. If you want a forecast you can trust, you need to pressure-test the inputs, understand which metrics actually move revenue, and stop counting vanity metrics as progress.

Across 50+ B2B clients and 400+ campaigns, the pattern is the same. Teams walk in with a spreadsheet that says "profitable" and a pipeline that says otherwise. The gap is always in the assumptions.

The Inputs Most ROI Calculators Get Wrong

Most ROI models fail before the first email goes out. The math isn't the issue. The assumptions are.

Founders usually undercount costs and overrate performance. Sales teams do the same thing when they want budget approval fast. That's how you end up with a spreadsheet that says "profitable" while the campaign burns time, tools, and sender reputation.

Count the costs people usually hide

If your cold email ROI calculator only includes sending software, it's wrong.

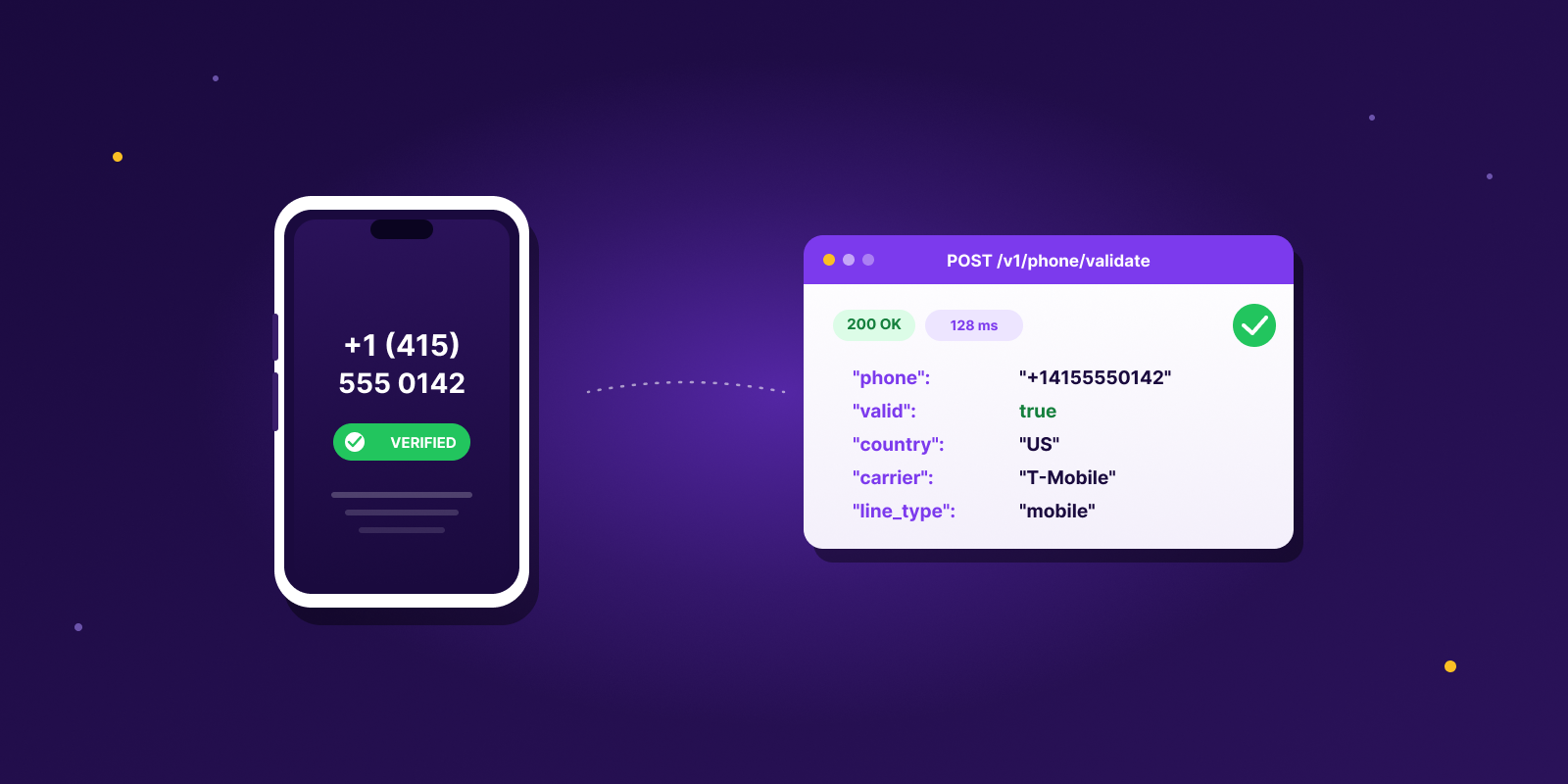

The total cost model includes labor, data, software, and infrastructure. Sparkle breaks that down as labor at $25-50/hour, data at $0.10-0.50/lead, software at $50-200/month per seat, and infrastructure such as domains at $12-32/year plus mailboxes at $4-6/month.
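To see how those ranges add up, here's a minimal Python sketch that totals one month of cost across the four buckets. The rates come from the Sparkle ranges above. The quantities (40 labor hours, 1,000 leads, two seats, three domains, six mailboxes) are hypothetical placeholders, so swap in your own.

```python
from dataclasses import dataclass


@dataclass
class MonthlyCostInputs:
    # Quantities are hypothetical placeholders; use your own invoices and timesheets.
    labor_hours: float            # human hours touching the campaign per month
    labor_rate: float             # $/hour (Sparkle range: $25-50)
    leads: int                    # leads sourced per month
    cost_per_lead: float          # $/lead (Sparkle range: $0.10-0.50)
    seats: int                    # software seats across all tools
    seat_price: float             # $/month per seat (Sparkle range: $50-200)
    domains: int                  # outreach domains
    domain_price_per_year: float  # $/year per domain (Sparkle range: $12-32)
    mailboxes: int                # sending mailboxes
    mailbox_price: float          # $/month per mailbox (Sparkle range: $4-6)


def monthly_cost(c: MonthlyCostInputs) -> dict:
    """Return the monthly cost of each bucket plus the total."""
    labor = c.labor_hours * c.labor_rate
    data = c.leads * c.cost_per_lead
    software = c.seats * c.seat_price
    # Domains are billed yearly, so spread them across 12 months.
    infrastructure = (c.domains * c.domain_price_per_year / 12
                      + c.mailboxes * c.mailbox_price)
    return {
        "labor": labor,
        "data": data,
        "software": software,
        "infrastructure": infrastructure,
        "total": labor + data + software + infrastructure,
    }


low = MonthlyCostInputs(40, 25, 1000, 0.10, 2, 50, 3, 12, 6, 4)
high = MonthlyCostInputs(40, 50, 1000, 0.50, 2, 200, 3, 32, 6, 6)
print(monthly_cost(low)["total"])   # 1227.0
print(monthly_cost(high)["total"])  # 2944.0
```

Even at these placeholder quantities, labor is the largest bucket in both scenarios. Drop it and the "model" shrinks to a software and data quote.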

Here's where teams usually get sloppy. Labor gets ignored. Someone still has to build lists, clean targeting, write copy, manage replies, and hand off meetings. Bad data gets treated like free data. Cheap lists look efficient until bounce risk, irrelevant contacts, and wasted rep time show up later. Tool sprawl sneaks in. Clay, Smartlead, HeyReach, LinkedIn steps, inbox management, enrichment, and verification all add cost even when each line item looks small. Infrastructure gets forgotten. Outreach domains and mailboxes are not optional if you care about deliverability.

Practical rule: if the calculator doesn't include the cost of the humans touching the campaign, it's not an ROI model. It's a software quote.

Data quality changes the whole forecast

Garbage in, garbage out becomes obvious fast.

A bad prospect list ruins reply quality, meeting quality, and deliverability at the same time. That's why data hygiene isn't some ops side quest. It sits inside the ROI model itself. Trackingplan's piece on data quality solutions frames it as a systems issue, not just a list-buying issue, which is the right way to think about it.

A lot of teams also skip enrichment depth. That's a mistake. If you're still working from flat contact exports, our guide on B2B data enrichment is worth reviewing before you trust any forecast.

Build a cost model you can actually use

You don't need a monster spreadsheet. You need an honest one. Start with a simple structure.

| Cost bucket | What belongs in it | Why it matters |
|---|---|---|
| Labor | List building, sequencing, inbox handling, qualification, meeting handoff | This is where "cheap outbound" stops being cheap |
| Data | Sourcing, verification, enrichment, signal tracking | Better targeting saves wasted sends and wasted meetings |
| Software | Sending platform, enrichment tools, workflow tools, LinkedIn automation | Tool costs compound fast when multiple people touch the campaign |
| Infrastructure | Domains, mailboxes, warmup, deliverability monitoring | Ignore this and your send model won't survive real-world delivery |

Then run the numbers through the funnel. Here's a simple worked example:

| Input or outcome | Value | What moves it |
|---|---|---|
| Emails sent per month | 1,000 | Sending capacity, domain health, mailbox count |
| Reply rate | 3% (30 replies) | Targeting, signal use, copy, subject line |
| Meetings booked | 15 (50% of replies) | Reply handling, CTA quality, qualification flow |
| Close rate | 25% | Sales execution, lead fit, offer strength |
| Deals signed | 3.75 | Volume and close rate compounded |
| Average deal size | $15,000 | What you actually sell |
| Monthly revenue | $56,250 | All of the above working together |
| Campaign cost | $50,000 | Full cost model, not just software |
| ROI ((revenue - cost) / cost) | 12.5% | Honesty of every input above |
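Here's the same arithmetic as a small Python function, so you can watch each stage feed the next. Two readings are ours rather than stated in the table: ROI is taken as (revenue - cost) / cost, and `meeting_rate` is the share of replies that book (15 meetings from 30 replies is 50%). The function name and parameters are illustrative, not the API of any calculator.

```python
def funnel_roi(emails_sent: int, reply_rate: float, meeting_rate: float,
               close_rate: float, deal_size: float, cost: float) -> dict:
    """Walk the funnel one stage at a time and return each stage's output."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    replies = emails_sent * reply_rate
    meetings = replies * meeting_rate      # share of replies that book
    deals = meetings * close_rate
    revenue = deals * deal_size
    roi = (revenue - cost) / cost          # net return on spend
    return {"replies": replies, "meetings": meetings, "deals": deals,
            "revenue": revenue, "roi": roi}


result = funnel_roi(1_000, 0.03, 0.50, 0.25, 15_000, 50_000)
for stage, value in result.items():
    print(f"{stage}: {value:,.3f}")
```

With the table's inputs, ROI comes out at 0.125, or 12.5%. Because every stage multiplies the one before it, a small miss on any single rate moves the final number proportionally.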

The useful part of a cold email ROI calculator isn't the final percentage. It's seeing which assumption is carrying the whole model.

What to plug into your own calculator

Don't copy benchmark numbers just because they look reasonable. Use them as a reference point, then replace them with your own operating reality.

Your inputs should come from things you can defend. Email volume based on current sending capacity. Reply rate from recent campaigns, not wishful thinking. Meeting conversion based on real inbox handling and CTA quality. Close rate from your sales process, not someone else's. Deal size based on what you sell. Total cost from the full model, not just subscriptions.

If one of those numbers is weak, that's useful information. It tells you where the work is. Our ROI calculator is set up to let you test each layer independently instead of handing you one clean number that hides the weak spot.

What Good Looks Like: Benchmarks and Sensitivity Analysis

A forecast means nothing without context. You need to know whether the inputs are normal, weak, or inflated.

At this point, many outbound teams fool themselves. They see one decent campaign, assume that result is standard, and build hiring or budget plans around it. Then the next quarter lands and the model breaks.

Benchmarks that deserve attention

Leads at Scale notes that industry averages often sit around 1-3% reply rates, with a healthy B2B cost per meeting (CPM here, not cost per thousand impressions) of $50-$100. If your CPM goes above $150, that's a sign to audit deliverability, targeting, or platform pricing. The same source puts an in-house SDR at $60K-$120K annually before they book a single meeting.
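A minimal sketch of that check in Python, assuming you already know total campaign cost and meetings booked for the same period. The $100 and $150 thresholds come from Leads at Scale; the "watch" label for the gap between them is our own, and the example figures are placeholders.

```python
def cost_per_meeting(total_cost: float, meetings: int) -> float:
    """Total campaign cost divided by meetings booked in the same period."""
    if meetings <= 0:
        raise ValueError("no meetings booked; cost per meeting is undefined")
    return total_cost / meetings


def cpm_status(cpm: float) -> str:
    """Classify cost per meeting against the cited benchmark thresholds."""
    if cpm <= 100:
        return "healthy"   # within or under the $50-$100 healthy range
    if cpm <= 150:
        return "watch"     # our label for the gap between $100 and $150
    return "audit"         # above $150: check deliverability, targeting, pricing


print(cpm_status(cost_per_meeting(1_500, 20)))  # healthy
print(cpm_status(cost_per_meeting(2_400, 15)))  # audit
```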

That gives you a useful baseline. Not a promise. A baseline.

Reachly's own campaigns tend to run hotter on reply rate because we lead with signal-based targeting instead of flat lists. The Primal campaign hit an 8% positive reply rate in month one across signal-triggered segments such as recent funding, hiring for marketing roles, and decreasing organic traffic. Multichannel reply rates across email, LinkedIn, and cold calling consistently land in the 8-15% range for us, versus the 2% teams see with generic templates.

| Metric | Useful benchmark | What it tells you |
|---|---|---|
| Reply rate | 1-3% baseline, 5-8% with signal targeting | Whether your targeting and message are earning attention |
| Cost per meeting | $50-$100 | Whether the campaign is economically healthy |
| CPM warning zone | Above $150 | Whether your model needs fixing before you scale |
| Bounce rate | Under 3% | Whether your data and verification stack is working |
| In-house SDR cost | $60K-$120K annually | What you're comparing outbound economics against |

Sensitivity matters more than averages

Averages are fine for orientation. Sensitivity analysis is what keeps you honest.

You don't need fancy finance language here. Just test what happens when one assumption gets worse. Then test what happens when one gets better. If replies come in at the low end, does the campaign still work? If meetings stay flat but costs rise, are you still comfortable with the payback? If CPM drifts past the healthy range, is the problem copy, list quality, or tooling? If your in-house SDR alternative costs more, does it also create better pipeline, or just more overhead?
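One way to run those questions is a one-at-a-time sensitivity check: change a single input, hold everything else at the base case, and compare ROI. The sketch below reuses the illustrative inputs from the example funnel table (1,000 emails, 3% replies, 50% reply-to-meeting, 25% close, $15,000 deals, $50,000 cost); the scenario values are hypothetical.

```python
BASE = {
    "emails_sent": 1_000,
    "reply_rate": 0.03,
    "meeting_rate": 0.50,
    "close_rate": 0.25,
    "deal_size": 15_000,
    "cost": 50_000,
}


def roi(p: dict) -> float:
    """Net ROI, (revenue - cost) / cost, for one set of funnel inputs."""
    revenue = (p["emails_sent"] * p["reply_rate"] * p["meeting_rate"]
               * p["close_rate"] * p["deal_size"])
    return (revenue - p["cost"]) / p["cost"]


def one_at_a_time(base: dict, scenarios: list) -> dict:
    """Change one input per scenario, hold the rest at base, report ROI."""
    results = {"base": roi(base)}
    for name, value in scenarios:
        if name not in base:
            raise KeyError(f"unknown input: {name}")
        trial = dict(base)   # copy so the base case is never mutated
        trial[name] = value
        results[f"{name}={value}"] = roi(trial)
    return results


scenarios = [("reply_rate", 0.01), ("reply_rate", 0.05), ("cost", 60_000)]
for label, value in one_at_a_time(BASE, scenarios).items():
    print(f"{label:>18}: {value:+.1%}")
```

At these inputs, a 1% reply rate turns the campaign sharply negative, and a 20% cost overrun is enough to erase the margin. That's the fragility a single headline ROI hides.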

A forecast should survive a bad month. If it only works when everything goes right, it isn't a forecast. It's a hope document.

What good actually looks like

Good doesn't mean "highest reply rate." That's too narrow.

Good means the campaign books meetings at an acceptable cost, hands sales conversations that can close, and stays stable enough that you can repeat it next month without repairing the whole setup. If one metric looks amazing while the next stage collapses, the model isn't good. It's just noisy. Our breakdown of cold email response rates goes deeper on what healthy ranges look like across industries.

Reading the Results Beyond the Final Number

A positive ROI can still hide a bad campaign.

That sounds strange until you see it in practice. Teams celebrate activity, then wonder why revenue doesn't follow. A cold email ROI calculator should help you spot that mismatch early, not cover it up with one nice-looking percentage.

Vanity metrics can still waste money

High opens don't mean the offer is relevant. High replies don't mean the campaign is healthy either.

Sometimes the replies are low intent, price-shopping, or confused. Sometimes the copy got attention but the CTA didn't convert to meetings. Sometimes the meetings happen, but sales rejects them because the targeting was off from day one.

Read the result stack in order. Replies without meetings usually point to weak CTA structure or reply handling. Meetings without pipeline usually point to poor qualification, which is why lead qualification matters more than people admit. Pipeline without closed revenue can point to sales execution, bad-fit accounts, or a long buying process that your attribution model ignores.
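Reading the stack in order can be sketched as a loop that stops at the first stage converting below a floor you set. The minimum conversion rates in the example are hypothetical; pick floors from your own history, not from this snippet.

```python
from typing import Optional

# Each step: (upstream stage, downstream stage, what a stall usually points to).
FUNNEL_STEPS = [
    ("replies", "meetings", "CTA structure or reply handling"),
    ("meetings", "pipeline", "qualification"),
    ("pipeline", "closed", "sales execution, bad-fit accounts, or a long buying cycle"),
]


def first_stall(counts: dict, min_rates: dict) -> Optional[str]:
    """Walk the funnel in order and report the first step below its floor.

    counts: stage name -> count (replies, meetings, pipeline, closed)
    min_rates: downstream stage -> minimum acceptable conversion from upstream
    """
    for upstream, downstream, likely_cause in FUNNEL_STEPS:
        if counts.get(upstream, 0) == 0:
            return f"no {upstream} to convert yet"
        rate = counts.get(downstream, 0) / counts[upstream]
        if rate < min_rates[downstream]:
            return f"{upstream} -> {downstream} at {rate:.0%}: check {likely_cause}"
    return None  # no stage fell below its floor


floors = {"meetings": 0.3, "pipeline": 0.5, "closed": 0.2}  # hypothetical floors
print(first_stall({"replies": 40, "meetings": 4, "pipeline": 3, "closed": 1}, floors))
```

Stopping at the first stall is deliberate: a weak downstream number is hard to interpret while an upstream stage is still leaking.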

Attribution is where teams undercount or overcount

Outbound rarely works as a single-touch channel. A buyer might reply to an email, view a LinkedIn profile, take a call later, and only convert after several follow-ups.

If you credit all revenue to the last meeting or all revenue to the first email, you're distorting the picture either way. For long sales cycles, you need to look at pipeline value and progression, not just closed-won deals that happen months later.
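To make the distortion concrete, the sketch below compares first-touch, last-touch, and linear attribution for one hypothetical deal. Linear attribution (equal credit per touch) is a common middle ground we've swapped in for illustration; the article doesn't prescribe a specific model, and the buyer path and deal value are placeholders.

```python
from collections import defaultdict


def attribute(touches: list, deal_value: float, model: str = "linear") -> dict:
    """Split one deal's value across the channels that touched the buyer."""
    if not touches:
        raise ValueError("need at least one touch")
    credit = defaultdict(float)
    if model == "first":
        credit[touches[0]] += deal_value
    elif model == "last":
        credit[touches[-1]] += deal_value
    elif model == "linear":
        share = deal_value / len(touches)   # equal credit per touch
        for channel in touches:
            credit[channel] += share
    else:
        raise ValueError(f"unknown model: {model}")
    return dict(credit)


journey = ["email", "linkedin", "email", "call"]   # hypothetical buyer path
for model in ("first", "last", "linear"):
    print(model, attribute(journey, 15_000, model))
```

First-touch gives email everything, last-touch gives the call everything, and neither matches how the deal actually happened.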

That's one reason final ROI often arrives too late to be useful. Operators need leading indicators they can act on while the campaign is still live. Watch where the funnel stalls. That tells you what to fix long before the quarter ends.

Bad messaging can poison the result before the model starts

One easy mistake is stuffing the email itself with ROI claims.

Gong analyzed over 132,000 emails and found that using specific ROI language such as "2x your revenue" tanks success by 15% because the claim lacks context and proof. That's a useful lesson for forecasting too. If your message promises business impact in a generic way, prospects don't buy it, and your calculator assumptions won't matter.

So when you review results, ask blunt questions. Did the campaign create real sales conversations? Did those conversations match your ICP? Did the pipeline move fast enough to justify the spend? Did the copy create curiosity, or did it just make claims?

A clean dashboard can still lie. The follow-through usually doesn't.

Levers You Can Pull to Change Your ROI

If the numbers look bad, don't scrap the whole channel yet. Fix the variables you control.

Most ROI improvement doesn't come from one heroic rewrite. It comes from changing the parts of the system that shape response quality, meeting quality, and cost control.

Tighten who you target

Better targeting fixes more problems than better writing.

When the list is too broad, you pay for it everywhere. More wasted sends. More weak replies. More meetings that never had a chance to close. Narrowing the ICP usually makes the whole funnel easier to read because the signal gets cleaner.

This is where signal-based outbound changes the economics. Instead of blasting a static list, you trigger outreach when something changes at the account. Recent funding, new leadership hires, hiring sprees in a specific department, competitor tool uninstalls, decreasing organic traffic. We run those signals through Clay so the data flows straight into sequences with live variables, not stale CSVs.

Rewrite the CTA before you rewrite everything else

A lot of campaigns don't have a copy problem. They have an ask problem.

If prospects reply but don't book, the CTA is often too vague, too early, or too demanding. A direct, low-friction next step usually beats an email that asks for too much commitment upfront. Pressure-test the ask. Is this one clear action? Does the next step feel small enough to say yes to? Would the buyer understand why this meeting is worth taking? Does the email sound like a person wrote it?

Follow-up structure changes economics

Single-email campaigns leave money on the table. Most real buying intent doesn't show up on touch one.

What works is a sequence with different angles, not the same bump repeated five times. One follow-up can add context. Another can sharpen relevance. Another can make the ask easier. LinkedIn and phone can support the sequence if the account matters enough. Our guide on automated email follow-ups goes deeper on what actually gets replied to versus what just clutters the inbox.

The first email gets noticed. The follow-up often gets the reply.

Protect deliverability like it's part of revenue

It is.

If inbox placement weakens, your ROI model becomes fiction because the volume in the spreadsheet no longer matches the volume reaching real buyers. Dedicated domains, mailbox rotation, verified data, and careful pacing are boring compared with copywriting. They also keep the whole machine alive. We run Smartlead for sending and Zapmail for domain infrastructure so the sending environment is isolated from the client's primary domain. Our full email deliverability guide walks through the setup in detail.
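Here's a rough way to see the gap between spreadsheet volume and real reach. This minimal sketch assumes the reply rate applies only to emails that actually land in the inbox; the 80% inbox placement figure is a hypothetical placeholder, and the 3% bounce rate is the top of the benchmark range above.

```python
def replies_reaching_buyers(emails_sent: int, bounce_rate: float,
                            inbox_placement: float, reply_rate: float) -> float:
    """Expected replies after bounces and spam-folder placement are removed.

    Assumes reply_rate is measured on emails that land in the inbox,
    not on all emails sent.
    """
    for name, rate in (("bounce_rate", bounce_rate),
                       ("inbox_placement", inbox_placement),
                       ("reply_rate", reply_rate)):
        if not 0 <= rate <= 1:
            raise ValueError(f"{name} must be between 0 and 1")
    delivered = emails_sent * (1 - bounce_rate)
    in_inbox = delivered * inbox_placement
    return in_inbox * reply_rate


spreadsheet = 1_000 * 0.03
real = replies_reaching_buyers(1_000, bounce_rate=0.03,
                               inbox_placement=0.80, reply_rate=0.03)
print(f"spreadsheet: {spreadsheet:.1f} replies, after delivery: {real:.1f}")
```

Every downstream stage in the funnel inherits that shortfall, which is why deliverability belongs inside the ROI model rather than beside it.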

Here's how each lever maps to the metric it actually moves, and what we see break most often when clients come to us from in-house setups.

| Lever | Moves | Common failure mode | What Reachly does about it |
|---|---|---|---|
| Targeting | Reply quality | Broad ICP, flat lists, no signals | Signal-triggered segments in Clay, variables baked into copy |
| CTA | Meeting conversion | Interest without commitment | Low-friction asks tested per segment, no "quick call" filler |
| Sequence | Total response volume | Stopping after one or two touches | Multichannel sequence across cold email, LinkedIn, cold calling |
| Deliverability | Actual inbox reach | Sending from main domain, no warmup, no rotation | Dedicated domains via Zapmail, Smartlead warmup and rotation |
| Qualification | Pipeline quality | Sales gets meetings they can't use | Pre-qualification layer before meetings hit the calendar |

How Reachly coordinates this at scale: every lever runs through one workflow instead of five disconnected tools. The client's team sees booked meetings on their calendar, not dashboards they have to manage.

The point of a cold email ROI calculator isn't to admire the current number. It's to decide which lever deserves attention next.

How a Done-For-You Service Changes the Math

DIY outbound looks cheaper until you count everything. Then the comparison gets less flattering.

When you run it in-house, your model includes tool subscriptions, list sourcing, copy, mailbox management, reply handling, qualification, and the time required to keep the whole system from slipping. You also own the execution risk. If targeting is weak or deliverability drops, your forecast may still look fine while performance deteriorates.

A done-for-you service changes the shape of the model. Your cost usually becomes one predictable operating line instead of a stack of fragmented costs. The assumptions can change too, because the team running the work already has workflows for data sourcing, sequencing, reply management, and qualification.

That doesn't mean "agency" automatically means better. Some agencies just repackage tool access and junior labor. The useful comparison is simpler. What inputs change, who carries the operational risk, and how quickly you can get to a stable campaign. Our guide on outsourced lead generation services breaks down what separates operators from resellers.

| Input | In-house DIY | Done-for-you (Reachly) |
|---|---|---|
| Team cost | $60K-$120K/year per SDR, plus manager time | One monthly operating line starting at $3,500/month |
| Tool stack | Clay, Smartlead, HeyReach, Zapmail, verification tools, all paid separately | Included in engagement, already configured and running |
| Ramp time | 3-6 months before an SDR is productive | Campaigns live within 2-3 weeks of contract signing |
| Execution risk | On your team, surfaces in missed targets | On the agency, surfaces in performance reviews |
| Infrastructure | Your team sets up domains, warmup, deliverability monitoring | Dedicated sending domains, never your primary domain |
| Reply and qualification | SDR splits time between prospecting and inbox | Dedicated inbox team, pre-qualified meetings to calendar |

What this looks like when it works: Primal came to us burning paid ad budget for every lead. We ran an evergreen outbound campaign plus four signal-based plays across hiring for marketing roles, recent funding, decreasing traffic, and companies not ranking on page one of Google. Six months in: 85 SQLs, 6 new deals signed, 8% positive reply rate, 35% CAC reduction, break-even at month three, 4.57x ROI. That's the kind of result a cost model tells you is possible but only delivers if the execution holds up across every layer of the funnel.

Plug an agency proposal into your calculator the same way you'd plug in an internal plan. Use the full cost, expected meeting output, and sales conversion assumptions you can defend. Then compare it against your DIY model without hiding labor or risk on either side.
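A minimal sketch of that side-by-side, assuming you compare year-one cost per meeting. The SDR salary ($60K) and the $3,500/month starting retainer come from the table above; the tool and manager-time lines, the 15 meetings per month, and the ramp values (3 months from the low end of the SDR range, 1 month as the 2-3 week launch rounded up) are hypothetical placeholders. Meeting output is deliberately identical so only cost and ramp differ.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    name: str
    annual_costs: dict          # every cost line, labor included
    meetings_per_month: float   # steady-state output you can defend
    ramp_months: float = 0.0    # months before the plan books meetings

    def total_cost(self) -> float:
        return sum(self.annual_costs.values())

    def cost_per_meeting(self) -> float:
        """Year-one cost divided by meetings booked after ramp."""
        productive_months = max(12 - self.ramp_months, 0)
        meetings = self.meetings_per_month * productive_months
        if meetings == 0:
            raise ValueError(f"{self.name}: no meetings in year one")
        return self.total_cost() / meetings


diy = Plan("In-house SDR",
           {"sdr_salary": 60_000, "tools": 6_000, "manager_time": 6_000},
           meetings_per_month=15, ramp_months=3)
agency = Plan("Done-for-you", {"retainer": 3_500 * 12},
              meetings_per_month=15, ramp_months=1)

for plan in (diy, agency):
    print(f"{plan.name}: ${plan.total_cost():,.0f}/yr, "
          f"${plan.cost_per_meeting():,.2f} per meeting")
```

The gap in this output comes from placeholder numbers, not a measured result. The useful habit is forcing both plans through the same structure so neither side can hide labor, tooling, or ramp.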

If you want to pressure-test your own numbers instead of guessing, our ROI calculator maps expected meetings, pipeline, and revenue from your outbound assumptions. Use it like an operator would. Start with conservative inputs, include the actual costs, and see whether the model still works before you scale. If the answer is "maybe," that's usually a targeting or offer problem, not a calculator problem, and our cold email agency page walks through how we handle both.

Get more meetings with the people who matter, 100% done for you.

We don't spray and pray. We use real buying signals to reach the right people at the right time, then run coordinated outreach across email, LinkedIn, and phone with messaging that earns replies.
